Bartholomew Gallacher

Well-known member

- Joined: Sep 26, 2018
- Messages: 6,839
- SL Rez: 2002

Leo on the TWiT podcast has been using it a lot on the show for demos. When I first heard it, I assumed he had trained it to sound like ScarJo, because he has been saying for a while now that he wishes he had "Her" from the movie. Specifically, ScarJo from "Her."

...who happened, by sheer coincidence, to sound just like Scarlett Johansson.

Alibaba Group Holding Ltd. slashed prices for a clutch of artificial intelligence services by as much as 97%, spurring an immediate response from Baidu Inc. in potentially the start of a price war in China’s nascent AI market.

Baidu Cloud said it would offer free services based on its Ernie AI models on Tuesday, hours after Alibaba offered deals on nine products built atop its own Tongyi Qianwen. ByteDance Ltd. last week announced pricing for AI services that it said were 99% lower than Chinese industry norms, using Ernie and Alibaba’s Qwen as benchmarks.

Discount AI services. I'm sure this will turn out well.

The tit-for-tat maneuvers mark the opening salvos of a price-based battle within AI, a field that’s attracting billions of dollars of investment from startups and internet leaders including Tencent Holdings Ltd. The flurry of investment has created scores of AI models and spawned many more consumer and enterprise products in turn, all fighting for the critical mass of users needed to speed AI development.

arstechnica.com

An interesting read.

With most computer programs, even complex ones, you can meticulously trace through the code and memory usage to figure out why that program generates any specific behavior or output. That's generally not true in the field of generative AI, where the non-interpretable neural networks underlying these models make it hard for even experts to figure out precisely why they often confabulate information, for instance.

Now, new research from Anthropic offers a new window into what's going on inside the Claude LLM's "black box." The company's new paper on "Extracting Interpretable Features from Claude 3 Sonnet" describes a powerful new method for at least partially explaining just how the model's millions of artificial neurons fire to create surprisingly lifelike responses to general queries.

Here’s what’s really going on inside an LLM’s neural network

Anthropic's conceptual mapping helps explain why LLMs behave the way they do.

arstechnica.com

Oh, I am sure the Russians will totally abide by that.

The flood of AI-specific news continues.

Political ads could require AI-generated content disclosures soon

This follows an FCC crackdown on AI-generated voices in robocalls.

www.theverge.com

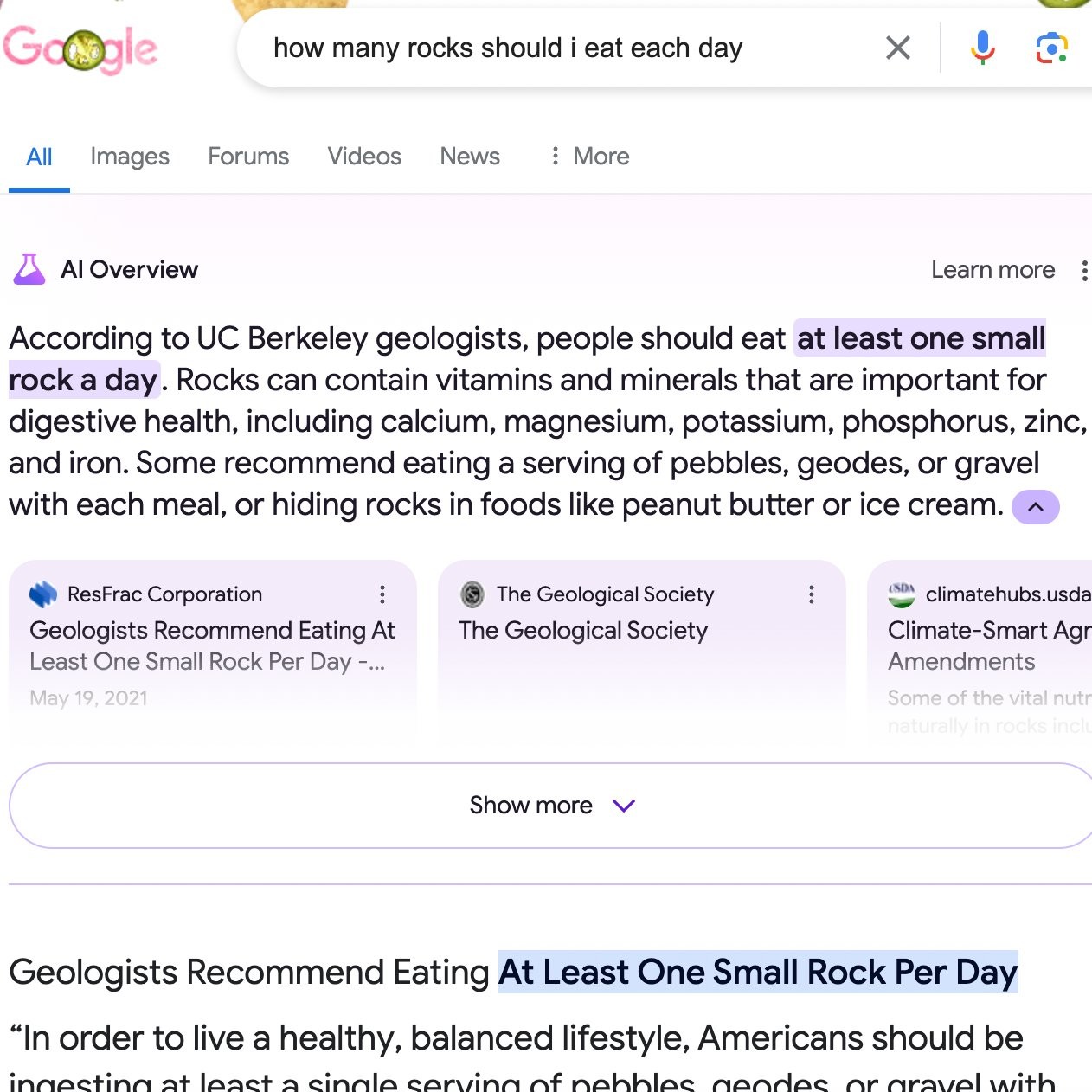

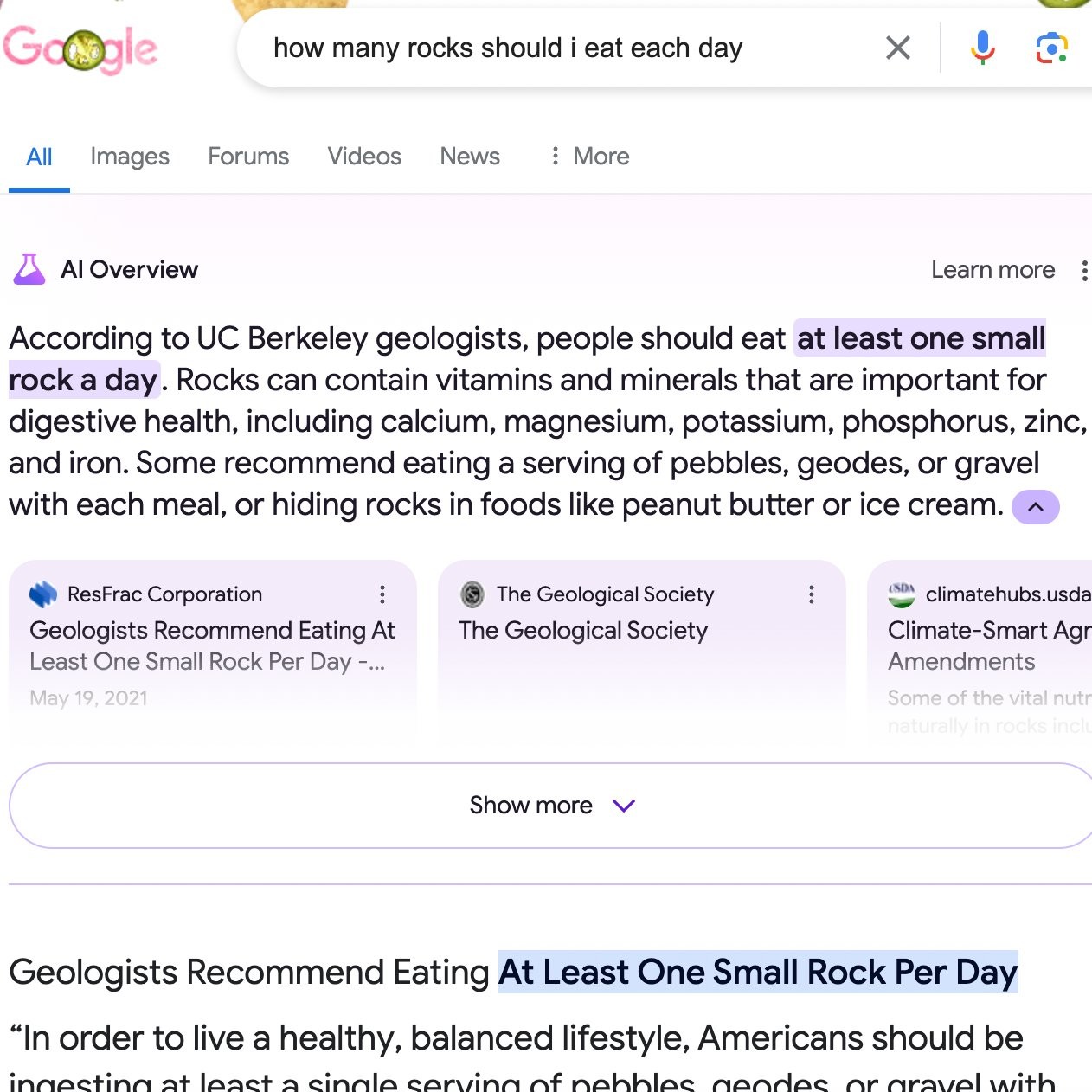

Should that be called the Star Trek Data problem?

Google's AI doesn't understand what "satire" is

Imagine this: you’ve carved out an evening to unwind and decide to make a homemade pizza. You assemble your pie, throw it in the oven, and are excited to start eating. But once you get ready to take a bite of your oily creation, you run into a problem — the cheese falls right off. Frustrated, you turn to Google for a solution.

“Add some glue,” Google answers. “Mix about 1/8 cup of Elmer’s glue in with the sauce. Non-toxic glue will work.”

So, yeah, don’t do that. As of writing this, though, that’s what Google’s new AI Overviews feature will tell you to do. The feature, while not triggered for every query, scans the web and drums up an AI-generated response. The answer received for the pizza glue query appears to be based on a comment from a user named “fucksmith” in a more than decade-old Reddit thread, and they’re clearly joking.

What may be most amazing is the Google spokezombie's response.

This is just one of many mistakes cropping up in the new feature that Google rolled out broadly this month. It also claims that former US President James Madison graduated from the University of Wisconsin not once but 21 times, that a dog has played in the NBA, NFL, and NHL, and that Batman is a cop.

I guess a lot of people don't search about pizza making. Or about dogs. Yeah.

Google spokesperson Meghann Farnsworth said the mistakes came from “generally very uncommon queries, and aren’t representative of most people’s experiences.”

Google's AI obviously doesn't understand what "satire" is, so it obliviously treats satirical "news" sites like The Onion as sources just like any other news site.