
Depending on which subreddits they train the AI on, this could get rather interesting.

www.wheresyoured.at


We're just over a year into the existence (and proliferation) of ChatGPT, DALL-E, and other image generators, and despite the obvious (and reasonable) fear that these products will continue to erode the foundations of the already unstable economies of the creative arts, we keep running into the problem that these things are interesting, surprising, but not particularly useful for anything.

Sora's outputs can mimic real-life objects in a genuinely chilling way, but its outputs — like DALL-E, like ChatGPT — are marred by the fact that these models do not actually know anything. They do not know how many arms a monkey has, as these models do not "know" anything. Sora generates responses based on the data that it has been trained upon, which results in content that is reality-adjacent, but not actually realistic. This is why, despite shoveling billions of dollars and likely petabytes of data into their models, generative AI models still fail to get the basic details of images right, like fingers or eyes, or tools.

These models are not saying "I shall now draw a monkey," they are saying "I have been asked for something called a monkey, I will now draw on my dataset to generate what is most likely a monkey." These things are not "learning," or "understanding," or even "intelligent" — they're giant math machines that, while impressive at first, can never assail the limits of a technology that doesn't actually know anything.

Despite what fantasists may tell you, these are not "kinks" to work out of artificial intelligence models — these are the hard limits, the restraints that come when you try to mimic knowledge with mathematics. You cannot "fix" hallucinations (the times when a model authoritatively tells you something that isn't true, or creates a picture of something that isn't right), because these models are predicting things based off of tags in a dataset, which it might be able to do well but can never do so flawlessly or reliably.

> Basically, let me know when AI can be told (to use the example above), "Draw a Monkey" with no training data aside from maybe basic English understanding. Let it learn what a monkey is, and what drawing is, etc. Make it actually "intelligent".

What you're asking for is AI, as in Actual Intelligence.

www.platformer.news


> This appears to be the semester that I start to get a flood of ChatGPT papers turned in. I could refer students for academic violations, but it turns out that AI does a terrible job of meeting assignment requirements and I can just give them failing marks without all the hassle.

I've always thought that students are asked to write essays not because the instructor is particularly interested in what they have to say, but because the discipline of sitting down, planning, writing and revising an essay forces you to think about the topic in hand far more thoroughly than you otherwise would, and is thus a learning experience.

> Is Chatbot an alias, Chatbox?

You don't know? Yes Free Xue. Chatbox is an alias. I could be wrong, but all I can say is that this is a long-time member of sluniverse / VVO who has not been active in quite a long time.

Uh, I know that. I've been here all that time. I'm sure you've seen me here.

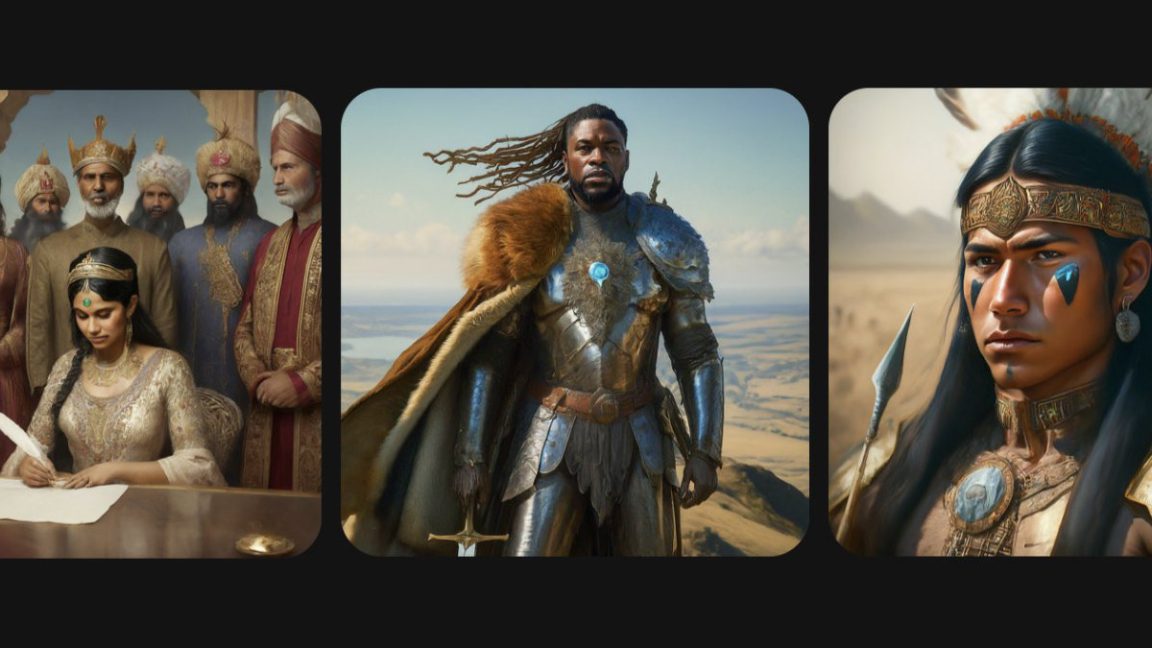

Google's text to image AI producing some pictures of 1943 Wehrmacht soldiers, what could go wrong?

arstechnica.com


On Thursday morning, Google announced it was pausing its Gemini AI image-synthesis feature in response to criticism that the tool was inserting diversity into its images in a historically inaccurate way, such as depicting multi-racial Nazis and medieval British kings with unlikely nationalities.

"We're already working to address recent issues with Gemini's image generation feature. While we do this, we're going to pause the image generation of people and will re-release an improved version soon," wrote Google in a statement Thursday morning.

As more people on X began to pile on Google for being "woke," the Gemini generations inspired conspiracy theories that Google was purposely discriminating against white people and offering revisionist history to serve political goals. Beyond that angle, as The Verge points out, some of these inaccurate depictions "were essentially erasing the history of race and gender discrimination."